FOSDEM talks and emulation

My latest talks and project updates

October 16, 2022

In 2019 I gave a talk at FOSDEM in Brussels on the "music portal", a device I built for my children as a toy that plays music. I wrote about the first version in awk here. The talk revolved around the specifics of the Go language and how it was used to industrialize a prototype into a reliable appliance with gokrazy.

In 2021, I started working on an emulator. It's one of those things I had always wanted to do, and it finally seemed simple enough given my experience writing small assembly bots, improving the ESIL emulator (which relied on the capstone disassembler), and working with qemu in my previous day job. I picked a platform from my childhood and started typing during my summer break.

I did not finish the emulator, but I ended up with a somewhat working Z80 CPU and learned a lot along the way. So I decided to share what I learned, this time focusing not on my code (there are many such emulators, and many are very good) but on the CPU architecture itself, the Z80, and some secrets that were discovered 30 years after its release.

I submitted a proposal to the FOSDEM Emulation devroom, and it was accepted. You can read the slides and watch my 2022 talk on Z80's last secrets here, as well as the discussion that followed, which is almost as long! I had a poor network connection at the time (and bad mic settings), so I'm sorry about the quality of the Q&A.

My talk did not cover every detail of the Z80. For example, I had missed the gate-level reverse-engineered simulators z80explorer and visualz80remix, which should help with MEMPTR and other implementation details. After the talk, I also discovered a Discord server where emulator developers discuss even more recently discovered secrets. But adding that content would have made the talk even more specialized and complex. Maybe for a future talk?

In the Q&A, I was asked whether I preferred Go (since I had given a talk on a Go project 3 years earlier) or Rust (the language gears is written in). I have been learning Rust for some time, and I still don't know how to answer this question. Go is definitely a simpler language, even though it has some surprising quirks. Rust is more complex, but what you learn upfront reduces surprises later. Both can be very useful, and their use cases even overlap somewhat; for more details, I'll defer to John Arundel's Rust vs Go. (Fun story: I started learning Go 9 years ago, and am now writing some professionally; maybe in 5 years I'll write Rust for money? Although I suspect it might happen sooner…)

Emulator progress

This summer (2022), I started working on the emulator again, wanting to tackle at least the display side (VDP) of the Game Gear. In the process, I discovered that I had forgotten to wire up interrupt emulation properly (the test suites don't need it, since they run without any devices). I also took some time to properly implement memory banking for Sega cartridges, which the ZX Spectrum tests don't use. I still found and fixed bugs in my supposedly "complete" Z80 CPU emulator. There are other CPU issues I'm not sure how to fix yet, but they shouldn't matter for basic emulation.
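For readers unfamiliar with Sega cartridge banking, here is a minimal sketch of the commonly documented "Sega mapper" in Rust. It is not the code from gears, and the `SegaMapper` type and its methods are hypothetical names. It assumes 16 KiB ROM banks selected by writes to 0xFFFD, 0xFFFE and 0xFFFF (one register per slot), the first 1 KiB of ROM always mapped at 0x0000, and 8 KiB of system RAM at 0xC000 mirrored at 0xE000. Cartridge RAM (controlled through 0xFFFC) is deliberately left out.

```rust
// Assumed mapper layout (not taken from gears): 16 KiB ROM banks.
const BANK_SIZE: usize = 0x4000;

/// Hypothetical sketch of the standard Sega mapper, without cartridge RAM.
struct SegaMapper {
    rom: Vec<u8>,
    ram: [u8; 0x2000], // 8 KiB system RAM at 0xC000, mirrored at 0xE000
    banks: [u8; 3],    // bank registers written at 0xFFFD, 0xFFFE, 0xFFFF
}

impl SegaMapper {
    fn new(rom: Vec<u8>) -> Self {
        // Power-on state: slots 0, 1, 2 map banks 0, 1, 2.
        SegaMapper { rom, ram: [0; 0x2000], banks: [0, 1, 2] }
    }

    /// Read `offset` inside ROM bank `bank`, wrapping the bank number
    /// around the number of banks actually present in the ROM.
    fn rom_byte(&self, bank: u8, offset: usize) -> u8 {
        let bank_count = (self.rom.len() / BANK_SIZE).max(1);
        let bank = bank as usize % bank_count;
        self.rom.get(bank * BANK_SIZE + offset).copied().unwrap_or(0xFF)
    }

    fn read(&self, addr: u16) -> u8 {
        let a = addr as usize;
        match addr {
            // The first 1 KiB always maps to the start of the ROM.
            0x0000..=0x03FF => self.rom_byte(0, a),
            0x0400..=0x3FFF => self.rom_byte(self.banks[0], a),
            0x4000..=0x7FFF => self.rom_byte(self.banks[1], a - 0x4000),
            0x8000..=0xBFFF => self.rom_byte(self.banks[2], a - 0x8000),
            // 0xC000..=0xFFFF: system RAM, mirrored every 8 KiB.
            _ => self.ram[a & 0x1FFF],
        }
    }

    fn write(&mut self, addr: u16, val: u8) {
        // RAM writes (including the mapper register addresses) land in RAM.
        if addr >= 0xC000 {
            self.ram[addr as usize & 0x1FFF] = val;
        }
        // The bank registers also latch the written value.
        match addr {
            0xFFFD => self.banks[0] = val,
            0xFFFE => self.banks[1] = val,
            0xFFFF => self.banks[2] = val,
            _ => {} // writes to ROM addresses are ignored
        }
    }
}
```

Since the Z80 only sees 64 KiB, this indirection is what lets larger games run: the game writes a bank number to a register, and the same address window now shows a different part of the cartridge.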

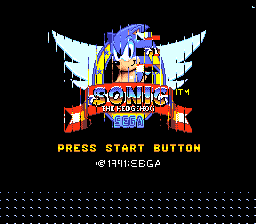

At first, I dumped the VDP display state into an image, to check whether I had correctly understood how the background and sprites are drawn. Here are renders of the Sega splash screen and the Press Start screen of Sonic The Hedgehog at three stages, as I fixed bugs in the implementation:

First render of the Sega splash screen, with buggy code.

Render of the Sonic Press Start screen, with the same buggy code.

Render of the Sega splash screen after some bugfixes.

Render of the Press Start screen after the same bugfixes.

Fixed Sega splash screen render, with proper sprite offsets.

Fixed Press Start screen render, with proper sprite offsets.
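Drawing the background and sprites starts with decoding tiles from VRAM. As a sketch, assuming the Master System/Game Gear tile format (8x8 pixels, 32 bytes per tile, 4 bytes per row with one byte per bitplane, bit 7 being the leftmost pixel), each row decodes into eight 4-bit palette indices. The function names are hypothetical, not taken from gears.

```rust
/// Decode one tile row: 4 bitplane bytes -> 8 palette indices (0..=15).
/// Assumes plane 0 holds bit 0 of each index and bit 7 is the leftmost pixel.
fn decode_tile_row(row: [u8; 4]) -> [u8; 8] {
    let mut pixels = [0u8; 8];
    for x in 0..8 {
        let bit = 7 - x;
        let mut index = 0u8;
        for plane in 0..4 {
            // Pick this pixel's bit from each plane and place it at bit `plane`.
            index |= ((row[plane] >> bit) & 1) << plane;
        }
        pixels[x] = index;
    }
    pixels
}

/// Decode a whole 8x8 tile, assuming tiles are stored contiguously in VRAM
/// starting at address 0, 32 bytes each.
fn decode_tile(vram: &[u8], tile_index: usize) -> [[u8; 8]; 8] {
    let base = tile_index * 32;
    let mut tile = [[0u8; 8]; 8];
    for y in 0..8 {
        let o = base + y * 4;
        tile[y] = decode_tile_row([vram[o], vram[o + 1], vram[o + 2], vram[o + 3]]);
    }
    tile
}
```

The resulting indices still have to go through the palette before becoming colors, which is one more place where an off-by-one can produce renders like the buggy ones above.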

Note that the renders above show the full VDP buffer; the Game Gear's LCD only displays a smaller area in the center. Cropping to that area is one of the things that aren't implemented yet!
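Cropping itself should be simple. Here is a minimal sketch, assuming a 256x192 VDP framebuffer of 32-bit pixels and the Game Gear's 160x144 LCD window centered in it, which puts the window at column 48, line 24. `crop_to_lcd` is a hypothetical helper, not part of gears.

```rust
// Assumed dimensions: full VDP active area and the Game Gear LCD window.
const VDP_WIDTH: usize = 256;
const VDP_HEIGHT: usize = 192;
const LCD_WIDTH: usize = 160;
const LCD_HEIGHT: usize = 144;

/// Copy the centered LCD window out of the full VDP framebuffer.
fn crop_to_lcd(vdp: &[u32]) -> Vec<u32> {
    assert_eq!(vdp.len(), VDP_WIDTH * VDP_HEIGHT, "unexpected VDP buffer size");
    let x0 = (VDP_WIDTH - LCD_WIDTH) / 2; // 48
    let y0 = (VDP_HEIGHT - LCD_HEIGHT) / 2; // 24
    let mut lcd = Vec::with_capacity(LCD_WIDTH * LCD_HEIGHT);
    for y in y0..y0 + LCD_HEIGHT {
        // Each LCD line is a contiguous slice of the matching VDP line.
        let start = y * VDP_WIDTH + x0;
        lcd.extend_from_slice(&vdp[start..start + LCD_WIDTH]);
    }
    lcd
}
```

Keeping the full buffer for debugging and cropping only at presentation time would preserve the useful "debug" view while showing what the real hardware displays.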

I then wired this "debug" view into a window that shows a pixel buffer, and right now it seems to work properly, since my display refreshes at 60 fps. There is still a lot to do, but it's very encouraging to see something on screen!
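Filling such a pixel buffer also requires converting palette entries to the window's pixel format. Here is a sketch assuming the Game Gear's 12-bit color RAM layout (two bytes per entry, little-endian, read as `----BBBBGGGGRRRR`) and a window that takes 32-bit `0x00RRGGBB` pixels; both function names are hypothetical.

```rust
/// Convert a 12-bit Game Gear palette entry (----BBBBGGGGRRRR) to 0x00RRGGBB.
fn gg_color_to_rgb(entry: u16) -> u32 {
    let r = (entry & 0xF) as u32;
    let g = ((entry >> 4) & 0xF) as u32;
    let b = ((entry >> 8) & 0xF) as u32;
    // Scale each 4-bit channel to 8 bits: 0x0 -> 0x00, 0xF -> 0xFF.
    ((r * 0x11) << 16) | ((g * 0x11) << 8) | (b * 0x11)
}

/// Read palette entry `i` (0..32) from a 64-byte color RAM, assuming
/// each entry is stored as two little-endian bytes.
fn cram_entry(cram: &[u8; 64], i: usize) -> u16 {
    u16::from_le_bytes([cram[2 * i], cram[2 * i + 1]])
}
```

Multiplying by 0x11 rather than shifting left by 4 keeps full intensity at full intensity: 0xF becomes 0xFF instead of 0xF0.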
